Kind: kubernetes in docker 2026-02-14

I’ve been meaning to move to an even easier-to-manage Kubernetes cluster for testing and development than the one you get from microk8s. Today I’m trying kind (Kubernetes in Docker), which is designed specifically for testing and local development machines.

Installing Kind

These days I’m on NixOS, so I simply ran nix-shell -p kind -p kubectl to set up the tools.

Example config for 4 workers (4-worker-cluster.yaml):

```yaml
kind: Cluster
apiVersion: kind.x-k8s.io/v1alpha4
nodes:
- role: control-plane
- role: worker
- role: worker
- role: worker
- role: worker
```
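The config is regular enough that other cluster sizes are just a matter of repeating the worker line. If you’d rather script that than edit YAML by hand, here is a minimal sketch; kind_config and the kind_config.py file name are hypothetical helpers, not part of kind, and the output is meant to mirror the 4-worker example exactly.

```python
import sys


def kind_config(workers: int) -> str:
    """Build a kind Cluster config with one control-plane node and
    `workers` worker nodes, in the same shape as the 4-worker example."""
    if workers < 0:
        raise ValueError("workers must be >= 0")
    lines = [
        "kind: Cluster",
        "apiVersion: kind.x-k8s.io/v1alpha4",
        "nodes:",
        "- role: control-plane",
    ]
    # One list entry per worker node.
    lines += ["- role: worker"] * workers
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    # Hypothetical usage: python kind_config.py 4 > 4-worker-cluster.yaml
    count = int(sys.argv[1]) if len(sys.argv) > 1 else 4
    sys.stdout.write(kind_config(count))
```

Writing the output to 4-worker-cluster.yaml gives the same file that kind create cluster --config consumes; only the number of worker lines changes with the argument.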

```console
❯ kind create cluster -n test --config 4-worker-cluster.yaml
Creating cluster "test" ...
 ✓ Ensuring node image (kindest/node:v1.34.0) 🖼
 ✓ Preparing nodes 📦 📦 📦 📦 📦
 ✓ Writing configuration 📜
 ✓ Starting control-plane 🕹️
 ✓ Installing CNI 🔌
 ✓ Installing StorageClass 💾
 ✓ Joining worker nodes 🚜
Set kubectl context to "kind-test"
You can now use your cluster with:

kubectl cluster-info --context kind-test

Thanks for using kind! 😊
```

Let’s check what this has done to our kubectl config.

(more...)

For a while now I’ve been using Bottlerocket as the base OS for cloud instances (i.e. the AMI), instead of Amazon Linux, Ubuntu, etc. Our workloads didn’t have much use for local storage until recently, so I finally invested some time in figuring out how to actually use the local SSDs where available (in AWS this type of storage is called instance store).

(more...)

I set up a local Kubernetes cluster with microk8s as a development/home cluster, and along the way I ended up connecting to the Kubernetes dashboard using a service account token, as recommended:

```console
$ microk8s kubectl create token default
(...token output...)
```

This process led me to a few questions:

- What is that token?

- Where does it come from?

- Where is it stored?

- Does it expire?

Essentially: “wait, how does this whole token authentication thing work?”
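A quick way to start on the expiry question is to look inside the token. Tokens returned by kubectl create token are JWTs: three base64url-encoded segments separated by dots, where the middle one is a JSON payload of claims such as sub (e.g. system:serviceaccount:default:default), iat and exp, the last two being Unix timestamps. The sketch below only decodes that payload and deliberately does not verify the signature; jwt_claims and expires_at are hypothetical helper names, not part of kubectl or microk8s.

```python
import base64
import json
from datetime import datetime, timezone
from typing import Optional


def jwt_claims(token: str) -> dict:
    """Decode the JSON payload (middle segment) of a JWT.

    For inspection only: the signature is NOT verified."""
    parts = token.strip().split(".")
    if len(parts) != 3:
        raise ValueError("expected header.payload.signature")
    payload = parts[1]
    # JWT segments are base64url with the trailing '=' padding stripped,
    # so put the padding back before decoding.
    payload += "=" * (-len(payload) % 4)
    return json.loads(base64.urlsafe_b64decode(payload))


def expires_at(token: str) -> Optional[datetime]:
    """Return the 'exp' claim (Unix seconds) as a UTC datetime, or None."""
    exp = jwt_claims(token).get("exp")
    if exp is None:
        return None
    return datetime.fromtimestamp(exp, tz=timezone.utc)
```

For example, save the output of microk8s kubectl create token default to a file and pass its contents to expires_at to see when it stops being accepted. Skipping verification is fine for looking around, since it is the API server that checks the signature when the token is actually used.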

(more...)

But why?

Whatever you think about Kubernetes, it is here to stay. At work it has replaced all our custom orchestration and our use of ECS. It solves infrastructure problems in a common way that most cloud providers have agreed on, which is no small feat.

So why would you want to install it locally anyway? At work, your company will most likely give you access to a cluster along with a set of credentials that somewhat limit what you can do. Depending on your interests, that won’t be enough to properly learn what is going on under the hood. Getting familiar with how Kubernetes stores containers and container logs, and how it handles networking, DNS resolution, load balancing, etc., will help remove a huge barrier.

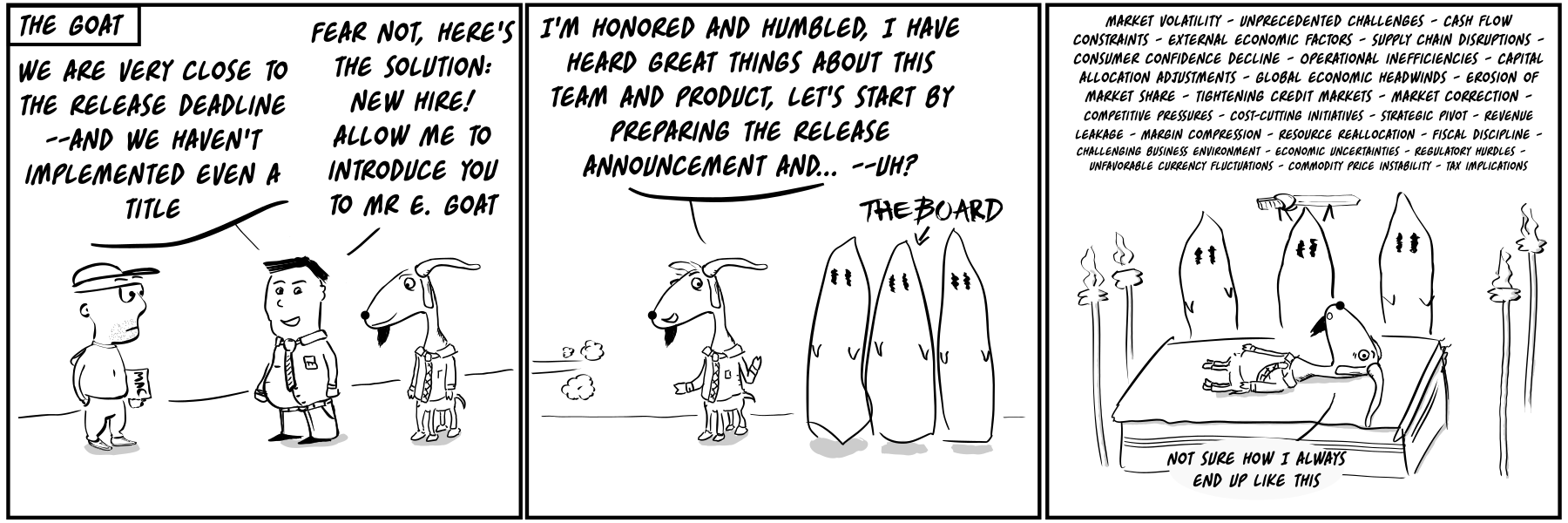

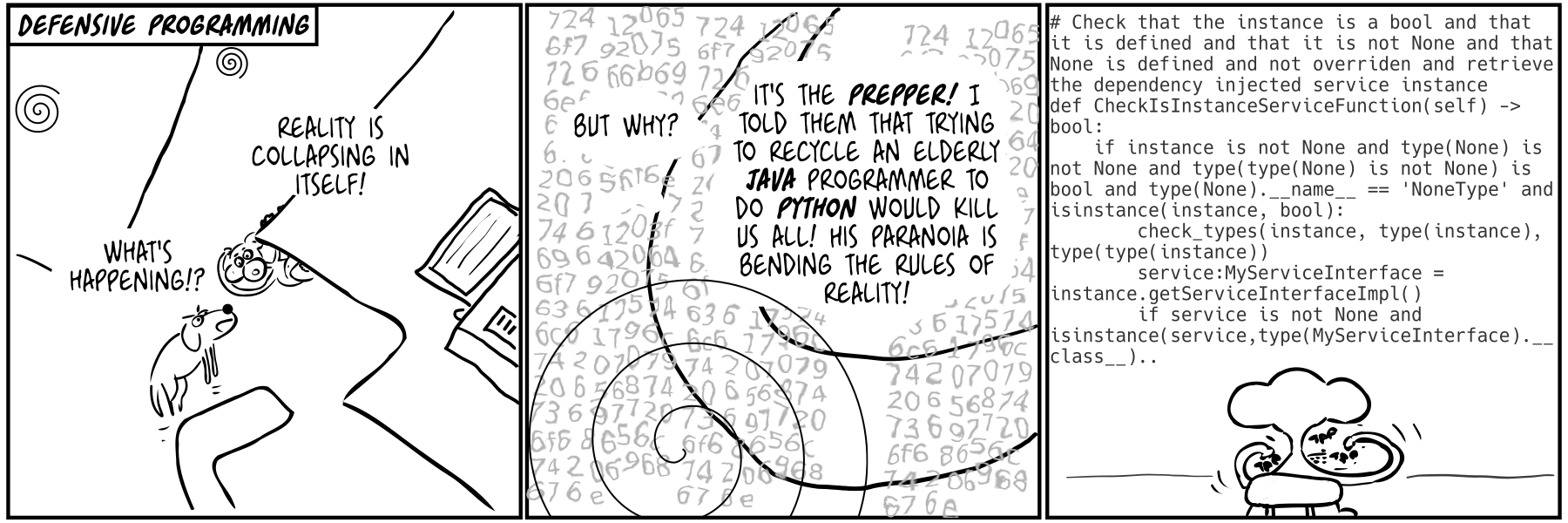

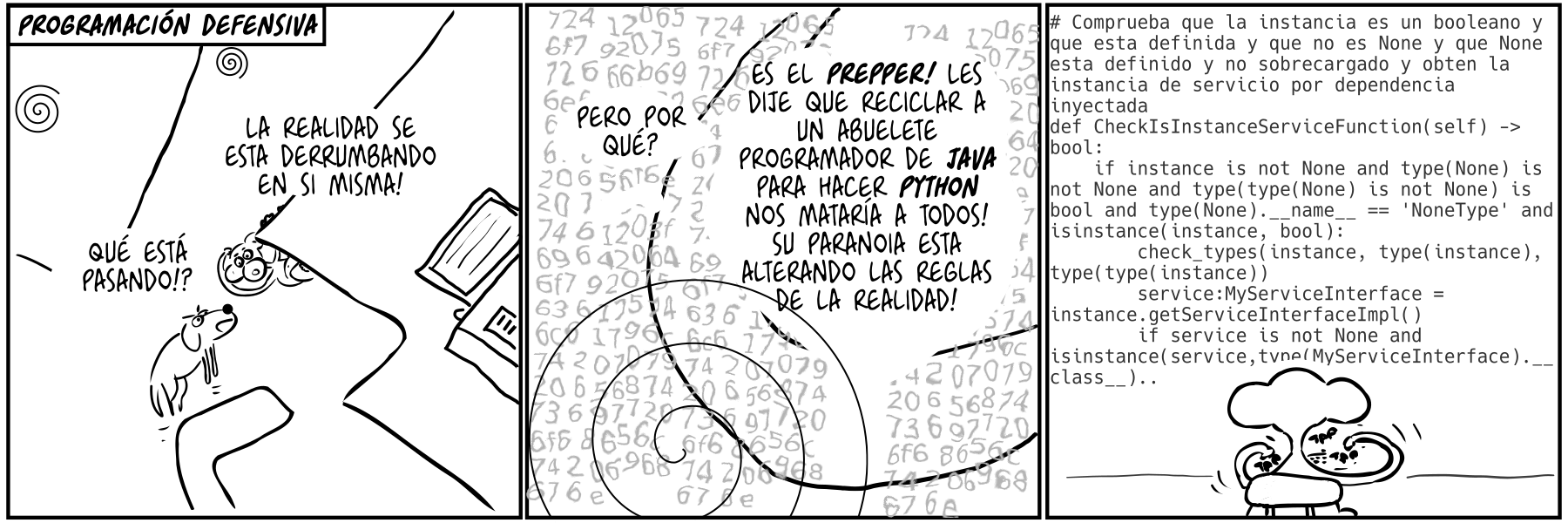

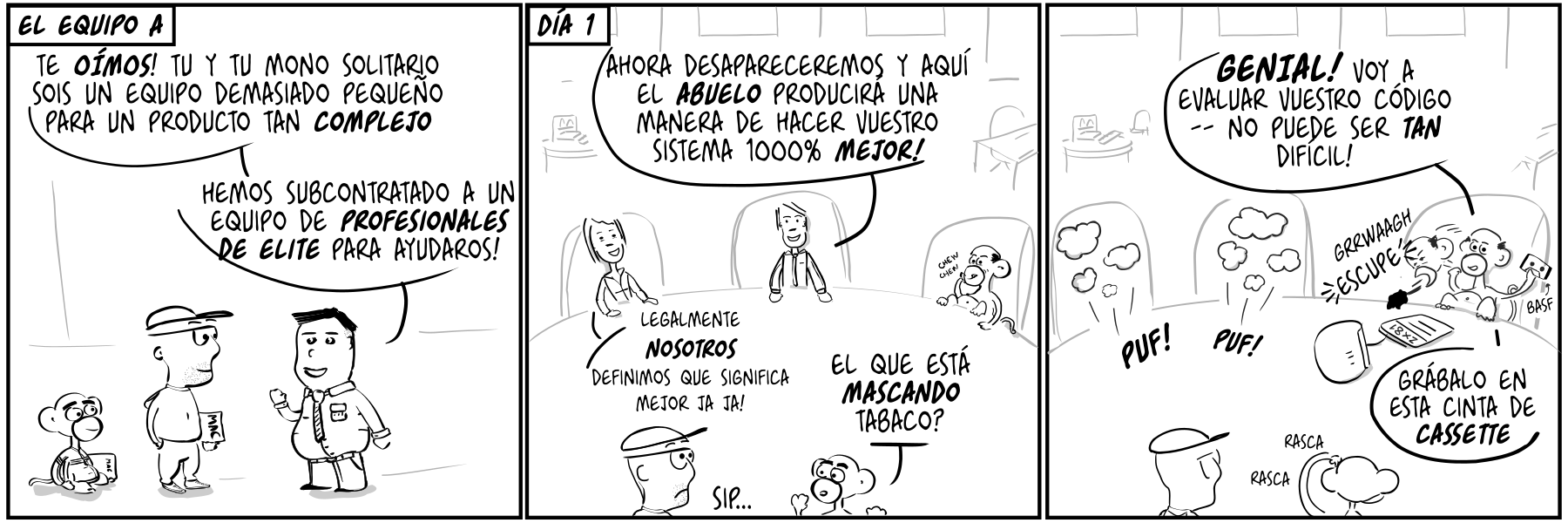

(more...)

Paul the innovator (84): Defensive programming 2022-07-07

When conveying complex information, I find some kind of diagram extremely helpful. Sometimes it’s just there to give people something to look at and point to; sometimes it’s key to following a complex flow.

After years of trying lots of different tools, it still seems to me that what I would call ‘hand-drawn’ diagrams are best. I don’t mean actually drawn by hand: I’m quite bad at whiteboard drawing, but give me a tool like OmniGraffle and I’ll make the nicest diagrams. I’ll even throw in some pastel colors.

(more...)

Wacom on Linux: my 2020 setup with aspect ratio fix, pan to scroll, drag, multitouch and more 2020-08-27

I’m a big fan of small Wacom tablets as a mouse replacement. I’ll go through my current Linux setup in case it helps anyone out there.

I started using a Wacom tablet for drawing but found it an excellent replacement for a mouse, and switched to using it exclusively when I started having symptoms of RSI. Going from resting my wrist on top of a mouse to gripping a pen made a big difference, and my problems went away.

(more...)